DesignHammer has a long relationship with Drupal, Jenkins, CasperJS, and PhantomJS. You can read through the history of the project in our previous blog posts:

- Using CasperJS, Drush, and Jenkins to test Drupal

- Testing Drupal data migrations with CasperJS

- Speed up Drupal content testing through Jenkins parallelization with Build Flow

- Building a Groovy Pipeline

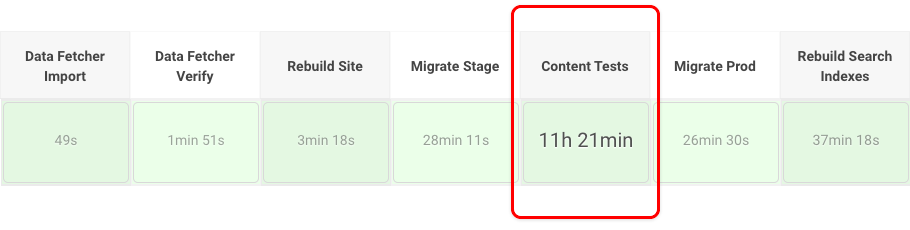

In 2017, the maintainer of PhantomJS (the headless browser that powered our content tests) stepped down, leaving the project unmaintained. Over the last few months, we started experiencing very long build times (over 11 hours!) for our pipeline, specifically during the CasperJS/PhantomJS test suite. After a whole lot of troubleshooting, we narrowed the problem down to a concurrency issue: running multiple instances of PhantomJS at once caused hangs.

Since an update for PhantomJS was certainly not forthcoming, we decided to take the plunge and completely rewrite the content tests.

We had several goals with rewriting the system:

- Run faster: We needed to fix the excessively long build times, which were causing recurring build failures. We also wanted to shave off some of the time the content tests take when running normally, as they have always been the longest portion of the build.

- Better logs and errors: We wanted to make the test failure messages clearer and reduce the logspam for passing tests (6 lines per test, 21,000 tests!).

- Simpler: For obvious reasons, we wanted to reduce the footprint of the test suite. This meant writing less code, using fewer dependencies, and relying on a simpler concurrency model.

- Report, not fail: Most test failures are due to simple typos that are easily corrected and not particularly impactful. Test failures should be logged and reported but should not cause the build to fail entirely.

To accomplish these goals, we rebuilt the content test suite using JSDOM. JSDOM provides a pure-JavaScript implementation of the DOM without implementing all of the components required for a full-featured web browser. Before choosing JSDOM, we considered simply replacing PhantomJS with Headless Chrome or another modern scriptable browser. However, our tests do not rely on testing browser behavior. Rather, we care about making sure the server has rendered the source data properly. We need to fetch a page from the site, query the DOM of that page to find content (titles, links, citations, etc.), and compare it to the ground truth from our source data. JSDOM gives us that capability without a lot of additional overhead.

We then re-implemented the individual CasperJS tests using the Chai assertion library. We’ve used Chai on several other projects, typically alongside Mocha or Karma, and like the readability of its expect() assertions. For this project, we skipped the unit-testing framework and just wrapped the assertions ourselves.

An example test using JSDOM and Chai looks like:

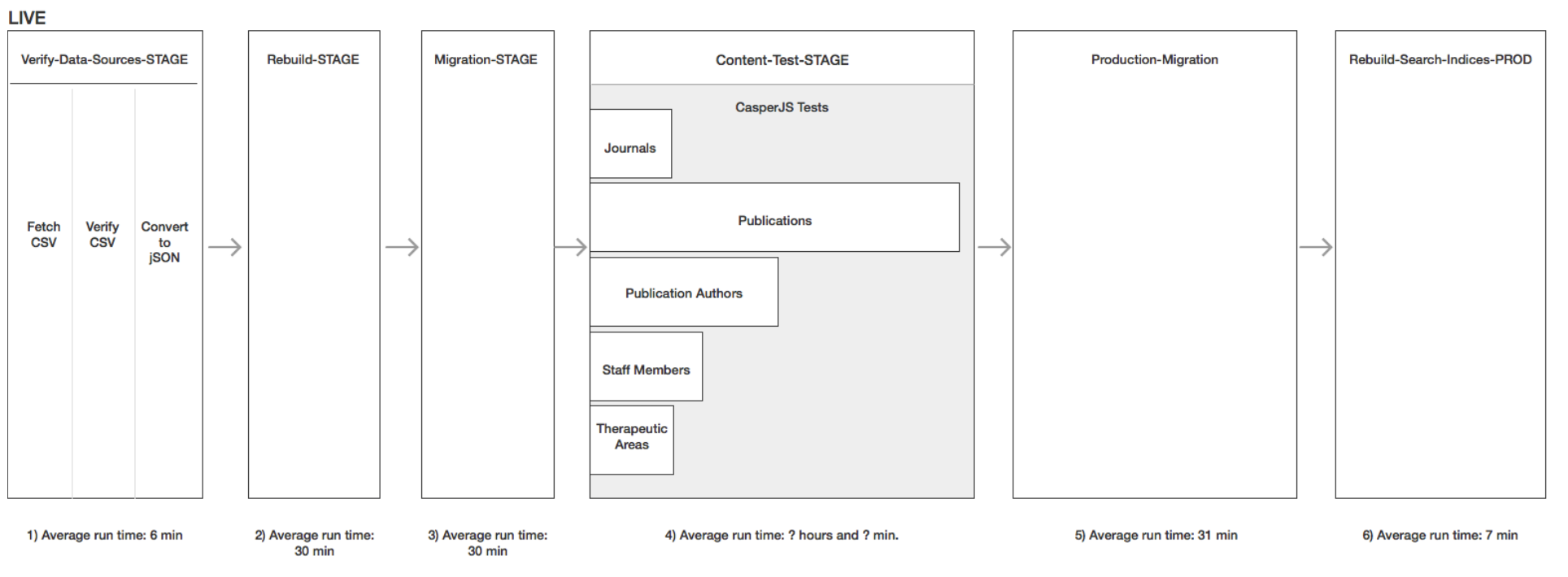

We have a test file like this for each type of content we are testing. A main.js file imports these content-specific tests and builds a set of jobs. In total, the system generates ~21,000 jobs. Each one is a function that loads a page on the target website into a JSDOM object and runs a set of assertions on the content using Chai. Errors or assertion failures are caught and logged.

We then push these jobs into a queue, using the queue package, and run it with a concurrency of 4. This is a big speed win. If you recall from a previous post, we had been using the Groovy pipeline to parallelize the content tests by content type. We ran multiple content types at once, but within a single content type the tests ran serially. This meant that the content tests as a whole could never finish faster than the slowest individual content type.
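
To see why per-type parallelism caps the speedup, it helps to compare the two schedules with made-up numbers. The content types, test counts, and per-test time below are hypothetical. They were chosen only so the total resembles our ~21,000 tests, and they assume every test costs the same.

```javascript
// Hypothetical split of ~21,000 tests across three content types.
const testsPerType = { article: 12000, citation: 6000, person: 3000 };
const msPerTest = 100; // assumed uniform cost per test
const concurrency = 4;

const counts = Object.values(testsPerType);
const total = counts.reduce((sum, n) => sum + n, 0);

// Per-type model: types run side by side, but each type is serial,
// so the wall time is set by the largest type.
const perTypeMs = Math.max(...counts) * msPerTest;

// Shared-queue model: four slots stay busy until the work runs out.
const sharedQueueMs = Math.ceil(total / concurrency) * msPerTest;

console.log({ perTypeMs, sharedQueueMs });
```

With these numbers the per-type schedule is bounded by the 12,000-test type, while the shared queue spreads all 21,000 tests evenly across the four slots.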

By building a single queue of all jobs and running them concurrently, we were able to preserve concurrency throughout the entire build, resulting in a major speedup.
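
A shared queue with a fixed concurrency can be sketched in a few lines of plain Node.js. This is a hand-rolled stand-in, not the queue package's API. `runQueue`, `makeJob`, and the job names are hypothetical, and a short timer stands in for loading a page.

```javascript
// Run async jobs with at most `concurrency` in flight at a time.
// Errors are captured per job so one failure never stops the queue.
async function runQueue(jobs, concurrency = 4) {
  const results = new Array(jobs.length);
  let next = 0;

  async function worker() {
    while (next < jobs.length) {
      const index = next++; // no interleaving between the check and the increment
      try {
        results[index] = { ok: true, value: await jobs[index]() };
      } catch (err) {
        results[index] = { ok: false, error: err.message };
      }
    }
  }

  const size = Math.min(concurrency, jobs.length);
  await Promise.all(Array.from({ length: size }, () => worker()));
  return results;
}

// Demo: jobs from every content type go into ONE list.
let inFlight = 0;
let peak = 0;
const makeJob = (name) => async () => {
  inFlight += 1;
  peak = Math.max(peak, inFlight);
  await new Promise((resolve) => setTimeout(resolve, 5)); // stands in for a page load
  inFlight -= 1;
  if (name.endsWith('-3')) throw new Error(`${name}: title mismatch`);
  return name;
};

const jobs = [];
for (const type of ['article', 'citation', 'person']) {
  for (let i = 1; i <= 4; i += 1) jobs.push(makeJob(`${type}-${i}`));
}

const finished = runQueue(jobs, 4).then((results) => {
  const failed = results.filter((r) => !r.ok).length;
  console.log(`ran ${results.length} jobs, ${failed} failed, peak concurrency ${peak}`);
  return results;
});
```

Because each worker pulls the next job from the shared list as soon as it finishes one, no slot sits idle while another content type still has work left.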

The system summarizes the results of the tests and writes them to a log file that is linked in Slack and email notifications. It is easy to just click the link and see the failures without having to scroll through thousands of log lines. The summary also contains details about resource usage and timing information for the tests so we can get granular data about how long the tests take to run and how much memory they consume.

The new structure also ensures that test failures no longer cause the build to fail. Now, errors are logged but the build continues. We can review the error log and resolve content issues as they are identified, but simple typos or character encoding problems will no longer prevent content from being imported.
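
A reporting step along these lines could look like the sketch below. It is a simplified illustration rather than our production code. The result shape, the `summarize` function, and the log file name are assumptions.

```javascript
const fs = require('node:fs');
const os = require('node:os');
const path = require('node:path');

// Build a short report: totals, timing, memory, and only the failing tests.
// Passing tests are counted but not listed, which keeps the log small.
function summarize(results, elapsedMs) {
  const failures = results.filter((r) => !r.ok);
  const rssMb = (process.memoryUsage().rss / 1024 / 1024).toFixed(1);
  return [
    `Tests run: ${results.length}`,
    `Failed: ${failures.length}`,
    `Elapsed: ${(elapsedMs / 1000).toFixed(1)}s`,
    `Memory (RSS): ${rssMb} MB`,
    ...failures.map((f) => `FAIL ${f.name}: ${f.error}`),
  ].join('\n');
}

// Hypothetical results, as a test runner might collect them.
const results = [
  { name: 'article/1', ok: true },
  { name: 'article/2', ok: false, error: "expected 'Teh Title' to equal 'The Title'" },
  { name: 'citation/1', ok: true },
];

const report = summarize(results, 1500);
const logPath = path.join(os.tmpdir(), 'content-test-summary.log');
fs.writeFileSync(logPath, `${report}\n`);

// Report, not fail: the exit code stays 0 even though a test failed.
process.exitCode = 0;
console.log(report);
```

The build step then only needs to publish the log file. Whether a run needs attention is decided by reading the summary, not by the exit code.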

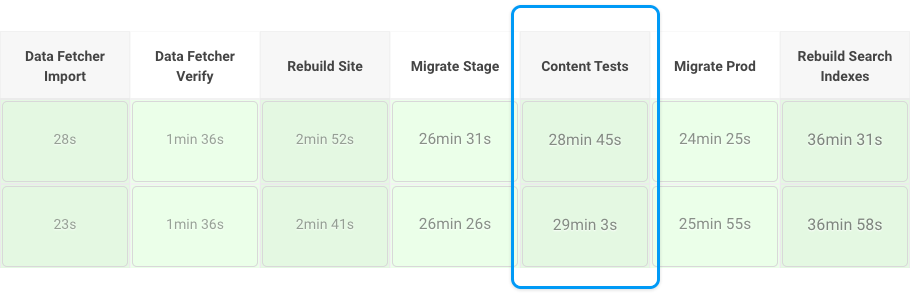

Finally, we knew that implementing the concurrent queue and using JSDOM would speed up the tests, but before we started we weren’t certain how much of an improvement we would see. Getting the content test time under three or four hours seemed reasonable. With everything implemented and tested in Jenkins on the production server, and running with a concurrency of 4, the total content test time is just under 30 minutes for all 21,000 records. The total build now takes about 2 hours, an approximately 90% reduction in total build time from our longest builds.

We are very happy with the results of this new JSDOM and Chai combination and are looking forward to the future of our content test pipeline as we look to Drupal 9 and beyond.
